From Chatbots to Agentic AI: How Servicely Is Turning Service Platforms Into Systems That Do the Work

For years, AI in IT service management has meant narrow use cases. A bit of predictive ticket routing here, some knowledge search there, maybe a chatbot on the portal.

Now we are entering a very different phase.

Agentic AI is about systems that act on your behalf. They do not just generate text or suggest answers. They understand context, choose the right tools, break work into steps and execute those steps safely inside (and outside) your service platform.

In a recent technical preview, the Servicely team showed how agentic AI is being embedded directly into the Servicely platform to move beyond chatbots and into true, AI-driven operations.

This article recaps the concepts and live scenarios from that session and explains what they mean for IT leaders.

From Servicely’s perspective, agentic AI in service management is about three main shifts:

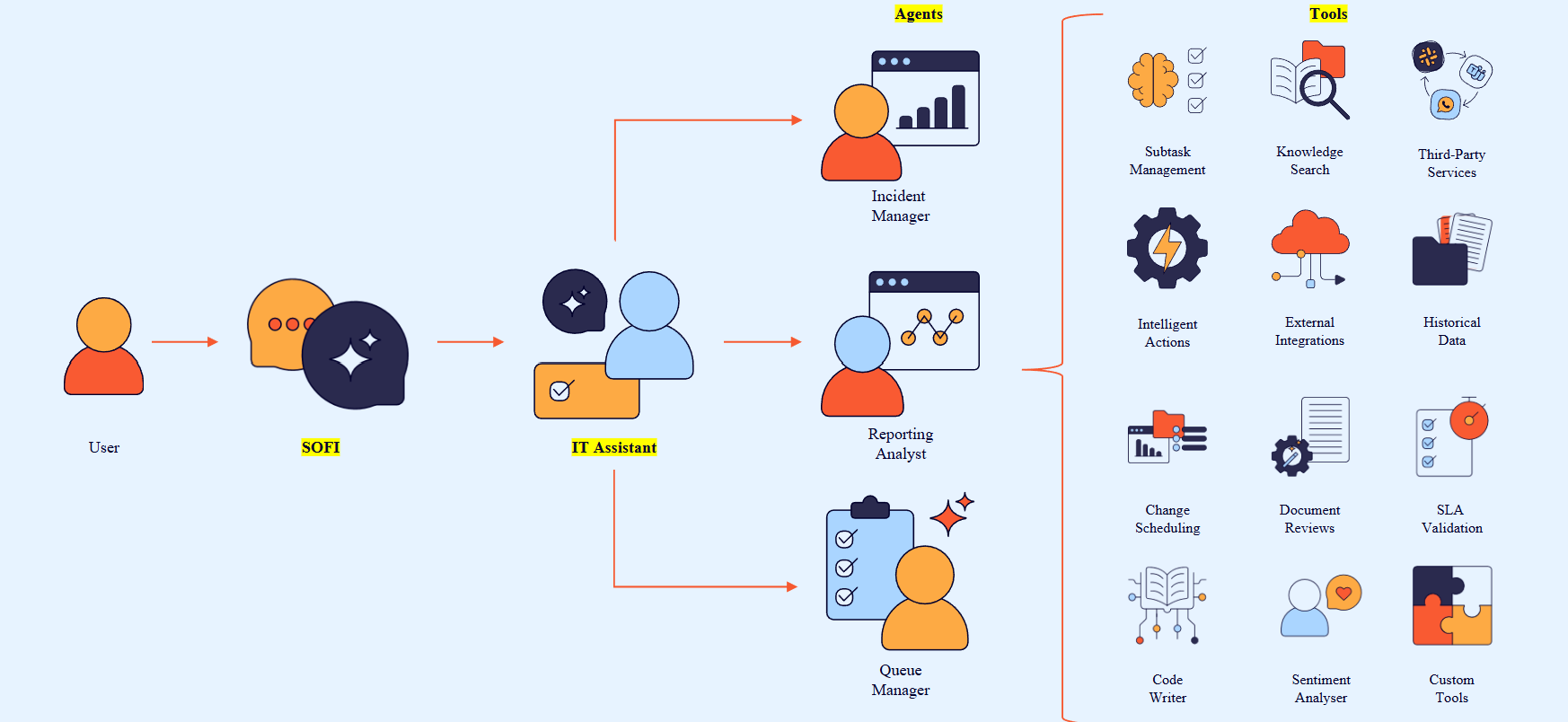

Rather than a single “AI brain”, Servicely uses a layered agentic stack:

This is what turns AI from a help desk add-on into a real operational engine.

The best way to understand the stack is to see how the layers fit together.

At the top is the SOFI UI. This is where users type questions or requests such as:

SOFI sends these to the right assistant, which then coordinates how work gets done.

Assistants sit between the conversation and the low-level tools. Each assistant has a clear domain. For example:

The assistant decides:

Agents are task-focused actors. You might have:

Each agent can call a dedicated toolbox. For example:

This layered model is critical for governance. You control which tools each agent can use, and which assistants can use which agents, so you do not end up with free-floating automation that bypasses policy or permissions.
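To make the governance idea concrete, here is a minimal sketch of how layered allow-lists could work. It is not Servicely's implementation or API: the `Registry`, `Agent`, `Assistant` and `PolicyError` names, the tool names and the sample data are all hypothetical, chosen only to illustrate an assistant that can reach a tool solely through an agent it is permitted to use.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, Set


@dataclass
class Agent:
    name: str
    allowed_tools: Set[str] = field(default_factory=set)


@dataclass
class Assistant:
    name: str
    allowed_agents: Set[str] = field(default_factory=set)


class PolicyError(Exception):
    """Raised when a call would bypass the configured allow-lists."""


class Registry:
    """Hypothetical registry holding tools, agents and assistants."""

    def __init__(self) -> None:
        self.tools: Dict[str, Callable[..., object]] = {}
        self.agents: Dict[str, Agent] = {}
        self.assistants: Dict[str, Assistant] = {}

    def run(self, assistant: str, agent: str, tool: str, **kwargs: object) -> object:
        # Layer 1: the assistant must be allowed to use this agent.
        if agent not in self.assistants[assistant].allowed_agents:
            raise PolicyError(f"{assistant} may not use agent {agent}")
        # Layer 2: the agent must be allowed to use this tool.
        if tool not in self.agents[agent].allowed_tools:
            raise PolicyError(f"{agent} may not use tool {tool}")
        return self.tools[tool](**kwargs)


registry = Registry()
# Illustrative tools backed by made-up data, not real platform calls.
registry.tools["lookup_manager"] = lambda user: {"sam": "alex"}.get(user)
registry.tools["create_incident"] = lambda title: {"title": title}
registry.agents["user_data"] = Agent("user_data", {"lookup_manager"})
registry.assistants["org_structure"] = Assistant("org_structure", {"user_data"})
```

In this sketch, `registry.run("org_structure", "user_data", "lookup_manager", user="sam")` succeeds, while asking the same agent to call `create_incident` raises `PolicyError`, because that tool is not in the agent's toolbox.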

The first set of scenarios focused on simple but common needs: looking up information and initiating basic requests.

A user can ask SOFI things like:

“Who is Sam’s manager?”

“What is Sam’s email address and phone number?”

The organisational structure assistant activates user data agents and tools that query the Servicely platform. The result is a clean, conversational answer that still respects the organisation’s data model and permissions.

From there, the user can go further:

“I have an expense claim to submit for the engineering team. Who would approve it?”

“Is there a catalog item for this?”

The system:

This is agentic AI working as guided self-service. It is not just “chat”; it is:

The next set of scenarios focused on the service desk agent experience.

An agent can start with a simple question:

“How much work do I have at the minute?”

The agent workload assistant activates, looks at the agent’s queue and returns a view of their current workload. From there, the agent can ask:

“Help me prioritise this work.”

The system analyses the queue context and proposes an ordered set of tickets to focus on.
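The session did not describe how the ordering is produced, so the following is only an illustrative sketch of one simple approach: sort by priority, then by age. The `Ticket` fields and the convention that priority 1 is the most urgent are assumptions, not Servicely's data model.

```python
from dataclasses import dataclass
from datetime import datetime
from typing import List


@dataclass
class Ticket:
    number: str            # hypothetical ticket identifier
    priority: int          # assumed convention: 1 = most urgent
    opened_at: datetime


def prioritise(queue: List[Ticket]) -> List[Ticket]:
    """Return a new list: most urgent first, oldest first within a priority."""
    return sorted(queue, key=lambda t: (t.priority, t.opened_at))


queue = [
    Ticket("T-3", priority=2, opened_at=datetime(2024, 1, 3)),
    Ticket("T-1", priority=1, opened_at=datetime(2024, 1, 5)),
    Ticket("T-2", priority=2, opened_at=datetime(2024, 1, 1)),
]
ordered = [t.number for t in prioritise(queue)]
```

A real assistant would likely weigh more context than this (SLAs, customer impact, dependencies), but the output has the same shape: an ordered list the agent can act on.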

From there, things get more interesting.

The agent can then say, for example:

“Leave a client journal update on that incident, letting the user know we are working on the ticket and will revert.”

The AI:

All of this happens without the agent having to dig through records manually or switch between multiple screens.

Agents can also create new incidents and tasks through the assistant:

From there, the agent can say:

“For that incident, create three subtasks, all assigned to the database support team.”

The system creates the tasks and links them to the incident.

If the incident also needs network analysis, the agent can ask:

“What team could help us resolve any network issues?”

The AI finds the appropriate team, such as infrastructure support, and then creates a subtask for that team, with the context that initial investigation has already taken place.
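The records created in this scenario can be pictured as a parent incident with linked subtasks. The sketch below is a hypothetical data structure, not the Servicely schema: the `Incident` and `Task` classes, their field names, the incident number and the incident summary are all invented for illustration.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Task:
    summary: str
    assigned_team: str
    notes: str = ""


@dataclass
class Incident:
    number: str
    summary: str
    subtasks: List[Task] = field(default_factory=list)

    def add_subtasks(self, count: int, team: str, notes: str = "") -> List[Task]:
        """Create `count` tasks for `team` and link them to this incident."""
        created = [
            Task(summary=f"{self.summary} (subtask {i})", assigned_team=team, notes=notes)
            for i in range(1, count + 1)
        ]
        self.subtasks.extend(created)
        return created


# Hypothetical incident; the number and summary are placeholders.
incident = Incident("INC-EXAMPLE", "Example incident")

# "Create three subtasks, all assigned to the database support team."
incident.add_subtasks(3, "Database Support")

# Follow-up subtask for the team suggested for network issues.
incident.add_subtasks(
    1,
    "Infrastructure Support",
    notes="Initial investigation has already taken place.",
)
```

The point of the structure is that each conversational instruction maps to a small, auditable change on a known record, rather than free-form text.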

This is a practical example of agentic AI doing structured work inside ITSM:

All from a conversational interface.

The agentic stack is not limited to incidents.

In a SecOps scenario, a user interacts with the SecOps assistant:

“Check the WordPress servers for any open vulnerabilities.”

Behind the scenes, the AI:

From there, the user can ask:

“Create a change request to fix these issues.”

The assistant and agents:

This is a strong example of agentic AI bridging multiple domains:

All inside Servicely’s permission and governance model.

Agentic AI is not just for end users and service desk agents.

Servicely also includes a code assistant that supports admins and developers who want to configure or extend the platform.

For example, you might ask:

“Create a server script that generates a record and an AI tool I can use to run that as part of an agent.”

The assistant:

In the demo, this was used to generate a report and visualise it as a bar chart, with the only manual step being “make this a bar chart and click run”.

In practice, this could extend to:

The end result is a faster path from idea to working automation, powered by AI but still under the control of your developers and administrators.

Throughout the session, many questions focused on safety, flexibility and enterprise controls.

Agentic AI in Servicely runs under the same permission model as the platform:

This means you can support advanced use cases like a “persona manager” for joiners, movers and leavers, where an assistant:

All within the guardrails you set.

The platform is LLM agnostic. Organisations can choose the model that best fits their strategy and compliance requirements, including:

You can also configure your own LLM endpoints where your subscription supports that.

The agentic tools can work on:

This allows scenarios like:

Agentic AI is not just another buzzword layered on top of chatbots.

Used well, it represents a shift from systems that simply capture and route tickets to systems that actually do the work for your teams.

From the Servicely technical preview, a few clear themes emerge:

If you are exploring how to move beyond isolated AI pilots and into everyday, operational impact, agentic AI inside a platform like Servicely is a practical next step.

You can use this article as a starting point to brief stakeholders, as a link in your event follow-ups, or as an invitation for your team to see a deeper demo of the scenarios described here.